Hello folks,

This is part one of a three-part blog series in which we dive deep into Internet edge design and architecture. There are three primary Internet edge designs we recommend at Archous Networks: Disaggregated, Layer 3, and Layer 2 + Layer 3. For today’s post, we will be focusing on “Disaggregated networking”, or “whitebox” solutions.

Design Overview and Definitions

Before we go in-depth with the discussion on the design, let’s define some key terms for the reader’s knowledge:

Control Plane – Function of a router responsible for “finding” paths to destination networks. Routing protocols interact directly with the control plane by contributing candidate routes to the routing information base (RIB); the best of those routes are then installed in the forwarding information base (FIB).

FIB – Forwarding Information Base. This holds the best (active) path chosen for each destination network and is what the data plane uses to forward traffic. The FIB usually also contains adjacency information: the outgoing interface used to reach the destination network and the details needed to build the layer 2 header for packets leaving that interface.

RIB – Routing Information Base. The cumulative paths or “routes” available to a router from various routing protocols. These may not be the “best” or “active” paths but they are the available ones.

Data Plane – The forwarding path chosen by a network switch or router to get to a given destination. The data plane is the path that actual network bits flow over.

Disaggregated Networking – The concept of separating hardware and software functions of network hardware. Traditional network hardware has software and hardware combined on the same physical appliance. Disaggregated networking opens the door for scaling opportunities by separating those functions out allowing you to use commodity server hardware for providing software functions such as routing protocols.

NFV – Network Function Virtualization. The actual process of taking a function that typically runs on physical hardware and collapsing it into a virtual machine. An example of this is running a routing protocol on a virtual machine instead of natively in hardware.

Internet Tunnel – A concept of establishing a point-to-point extension of the data plane deeper into the layers of the network via tunnels such as IPIP, GRE, and VXLAN.

Commodity Switch – An open networking switch using off the shelf chipsets such as Broadcom, Nvidia, or Marvell. Usually the switch is loaded with an open networking OS and has both chipset level and Linux kernel level access for building a highly customized switch stack.

Internet Edge/External ASNs – A term used to reference the part of the network responsible for connecting to external transit, peering, and Internet Exchange BGP peers.
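To make the RIB and FIB definitions above a little more concrete, here is a minimal Python sketch. It is a simplified illustration, not how any particular network operating system implements route selection: the `Route` class, the `build_fib` and `lookup` helpers, the `preference` values, and the interface names are all assumptions made for the example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Route:
    prefix: str         # destination network
    protocol: str       # routing protocol that contributed the route
    preference: int     # lower wins (illustrative values only)
    next_hop: str
    out_interface: str  # hypothetical interface name


def build_fib(rib):
    """Keep one active route per prefix: the one with the lowest preference."""
    fib = {}
    for route in rib:
        key = ip_network(route.prefix)
        best = fib.get(key)
        if best is None or route.preference < best.preference:
            fib[key] = route
    return fib


def lookup(fib, destination):
    """Longest-prefix match of a destination address against the FIB."""
    addr = ip_address(destination)
    matches = [net for net in fib if addr in net]
    if not matches:
        return None
    return fib[max(matches, key=lambda net: net.prefixlen)]


# The RIB holds every candidate route, including ones that will not be used.
rib = [
    Route("203.0.113.0/24", "ebgp", 20, "198.51.100.1", "swp1"),
    Route("203.0.113.0/24", "ospf", 110, "10.0.0.2", "swp2"),
    Route("0.0.0.0/0", "static", 1, "198.51.100.1", "swp1"),
]

fib = build_fib(rib)
print(len(rib), "routes in the RIB,", len(fib), "entries in the FIB")
print(lookup(fib, "203.0.113.9").protocol)
print(lookup(fib, "192.0.2.1").protocol)
```

The RIB keeps both candidates for `203.0.113.0/24`, while the FIB keeps only the preferred one, which is the distinction the two definitions draw.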

Now, let’s deep dive into our take on the high level architecture of a disaggregated switching solution for Internet Edge use cases.

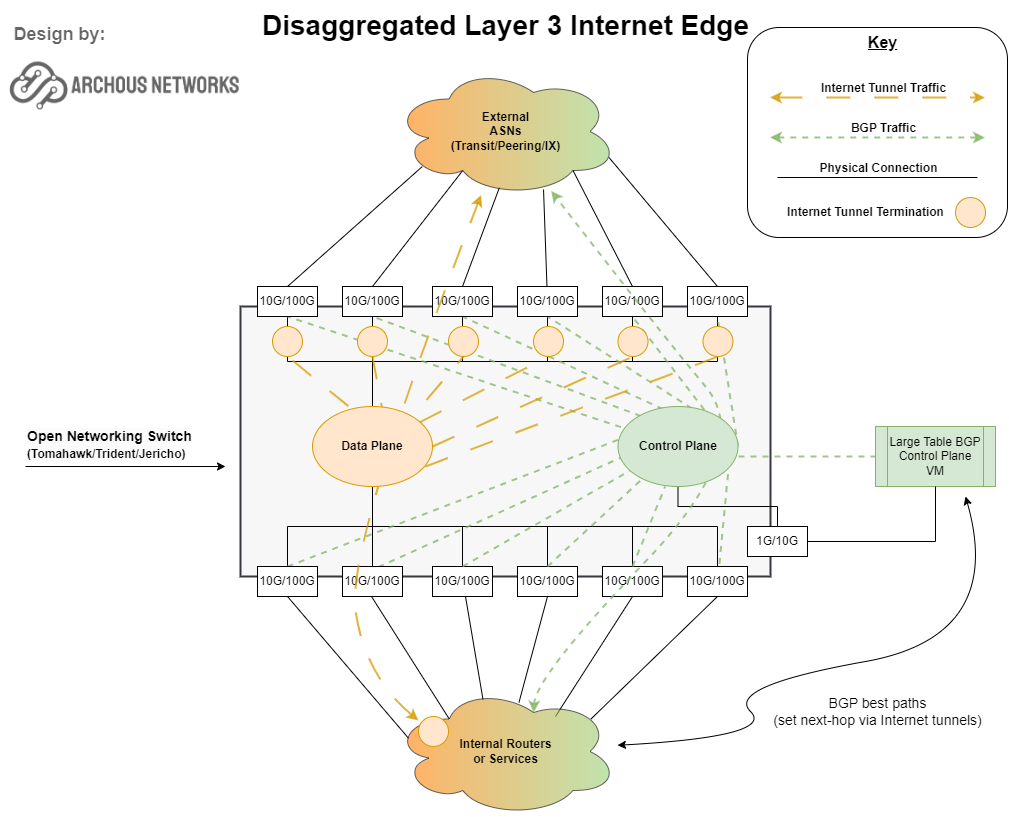

Consider the diagram below:

This diagram shows a view of a single commodity switch with logical constructs of the data plane (orange) and the control plane (green) as well as physical port connectivity. As you can see, internally we break the control plane and data plane up (just like with any off the shelf router) but the control plane function is actually extended into virtual machine(s) on the right of the diagram.

The control plane is disaggregated from the hardware resources of the switch, so we are no longer bound by the scaling limitations of the CPU and memory that are physically onboard the switch. Essentially CPU and memory, which are the primary resources consumed by the BGP routing protocol, are now “commoditized” into the virtual machine, where we can throw as much memory and CPU at the problem as the VM host will allow.

The data plane of the switch is also different from that of a typical vendor. In this design, the data plane for each external peer is actually tied to Internet Tunnels. Each external peer has a one-to-many relationship with tunnels in the data plane. This effectively means that you can extend the data plane from any given internal router, switch, or even an end-host via tunnels directly to any external ASN.
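The one-to-many relationship between an external peer and its tunnels can be modelled as a simple lookup table. The sketch below is only a mental model under stated assumptions: the `TunnelMap` class, its methods, the ASNs (taken from the documentation range), and the endpoint addresses are hypothetical and do not represent the actual Archous software.

```python
from collections import defaultdict

# Tunnel types named in the definitions earlier in this post.
SUPPORTED_TUNNEL_TYPES = {"ipip", "gre", "vxlan"}


class TunnelMap:
    """Maps one external peer ASN to many internal tunnel endpoints."""

    def __init__(self):
        self._tunnels = defaultdict(list)

    def add_tunnel(self, peer_asn, tunnel_type, internal_endpoint):
        """Attach another internal endpoint to an external peer."""
        if tunnel_type not in SUPPORTED_TUNNEL_TYPES:
            raise ValueError(f"unsupported tunnel type: {tunnel_type}")
        self._tunnels[peer_asn].append((tunnel_type, internal_endpoint))

    def tunnels_for(self, peer_asn):
        """Return a copy of the tunnels tied to a peer (empty if none)."""
        return list(self._tunnels.get(peer_asn, []))


tunnel_map = TunnelMap()
# A transit provider reachable from an internal router and from an end-host.
tunnel_map.add_tunnel(64496, "gre", "10.0.0.11")
tunnel_map.add_tunnel(64496, "vxlan", "10.0.0.42")
# A peering partner reachable from a single internal switch.
tunnel_map.add_tunnel(64497, "ipip", "10.0.0.12")

for tunnel_type, endpoint in tunnel_map.tunnels_for(64496):
    print(f"AS64496 <- {tunnel_type} tunnel from {endpoint}")
```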

Use Case in Action

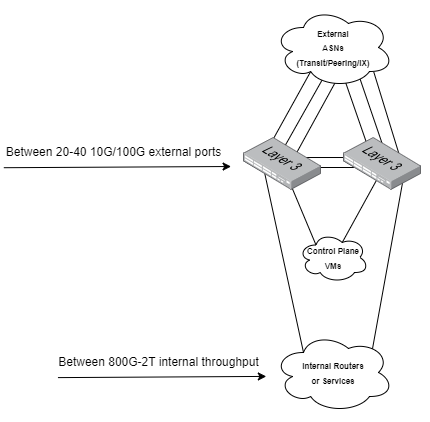

As an example, let’s look at an Edge-Core AS7712-32X (DCS501):

This open networking switch has 32 100G QSFP28 ports, each of which can operate in native 100G mode, native 40G mode, or 4x10G breakout mode. There’s one catch, though: the CPU is a 4-core Intel® Atom® C2538 at 2.4 GHz, and the datasheet states it can support 80k-100k IPv4+IPv6 routes. Once you subtract the CPU cycles used by the various other daemons on the box, there isn’t much horsepower left over for crunching route convergence scenarios. This is where disaggregation and NFV enter the room.

By going the disaggregated route, operators can deploy redundant virtualization hosts such as a pair of Dell VEP4600 or R-series servers to provide much higher (dedicated) CPU cycles for routing protocol convergence and high quantities of memory for storing multiple full transit BGP tables, large peering BGP tables, and Internet Exchange BGP tables. In fact, these virtual hosts can even be made redundant and used for other network functions such as route reflectors and DHCP/NTP/DNS servers.

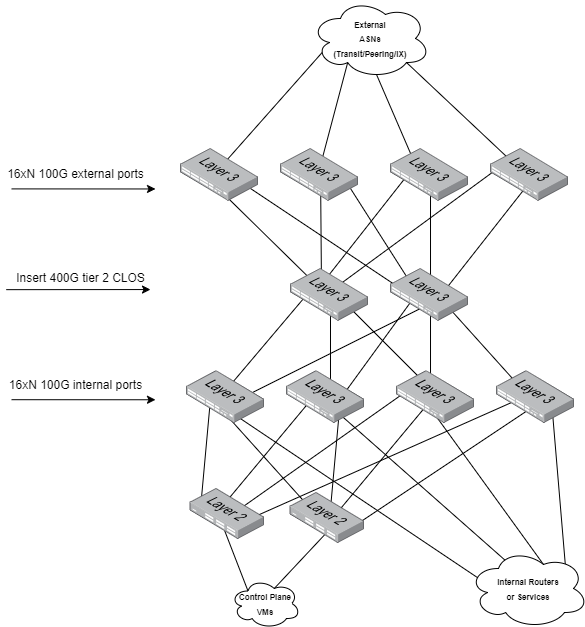

Building a Fabric and Scaling Up

An initial minimum deployment using our example AS7712-32X switches can yield between 800G and 2T of internal throughput (varies depending on external port configuration).

As the network grows past the initial deployment, a 400G tier of spine switches can be introduced and a spine-leaf Clos topology built, allowing the operator to scale to Nx16 ports, where N represents the quantity of internal and external AS7712-32X switches added to the fabric. The deep dive associated with growth at this level is outside the scope of this post, but know that as long as you have rack space and power, the port scaling capabilities are extraordinary.
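The Nx16 figure is easy to play with in a few lines of Python. One assumption to flag: the sketch reads "Nx16" as each 32-port leaf dedicating 16 ports to spine uplinks and keeping 16 for internal or external connections. The post does not spell out that split, and the function names are made up for illustration.

```python
PORTS_PER_LEAF = 32      # AS7712-32X port count
PORT_SPEED_GBPS = 100    # native 100G mode


def fabric_ports(leaf_count, uplinks_per_leaf=16):
    """Usable (non-uplink) ports across all leaf switches in the fabric."""
    if leaf_count < 0:
        raise ValueError("leaf_count must not be negative")
    if not 0 <= uplinks_per_leaf <= PORTS_PER_LEAF:
        raise ValueError("uplinks_per_leaf must be between 0 and 32")
    return leaf_count * (PORTS_PER_LEAF - uplinks_per_leaf)


def fabric_capacity_gbps(leaf_count, uplinks_per_leaf=16):
    """Aggregate speed of the usable ports, in Gbps."""
    return fabric_ports(leaf_count, uplinks_per_leaf) * PORT_SPEED_GBPS


for leaves in (2, 4, 8):
    ports = fabric_ports(leaves)
    gbps = fabric_capacity_gbps(leaves)
    print(f"{leaves} leaf switches: {ports} ports, {gbps} Gbps")
```

Changing `uplinks_per_leaf` shows how an oversubscribed design would trade uplink capacity for more usable ports.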

Budgeting & Cost Per Port

When built properly, disaggregated networks have the absolute lowest cost per port compared to typical vendor-based solutions at the cost of a slightly larger initial footprint and a moderate cost of entry. For a minimum deployment we strongly suggest at least two switches plus a pair of route lookup virtual host servers.

For a 32-port 100G Trident 3 box, the price point is around $6,500-$8,000 per switch. For a 32-port 100G Tomahawk, you’re looking at around $9,500-$11,000. Some specific models that can be leveraged here are FS.com switches such as the N8560-32C, N8550-32C, and N5860-48SC. There are other perfectly capable switches as well, such as the Dell S5232F and the Edge-Core AS7712-32X. The appropriate switch to choose depends on your desired port density/speed, availability, and whether or not you need features such as QoS (rate-limiting) and MPLS on these switches.

Virtual hosts are relatively inexpensive, especially on the refurb market. A virtual host with 128 GB of RAM and a 16-core Xeon Silver processor could easily be obtained for $3,500-$4,500.

For software, there are a variety of options available. IP Infusion’s OcNOS is a great feature-rich network operating system with a Cisco-like CLI, but there is a cost associated with each switch of about $1,000-$1,500 for a 3-year license (with support). Edge-Core switches come with an Enterprise SONiC license which is free to use. Dell S-series switches are usually bundled with Dell OS10 and a support agreement.
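To get a rough feel for how the hardware and software price ranges quoted above combine into a per-port figure, here is a small back-of-the-envelope calculation. It is a sketch, not the author's own cost summary: it assumes the minimum deployment of two 32-port Trident 3 switches and two virtual hosts, counts all 32 ports on each switch, and leaves out optics, cabling, labor, and anything else a real quote would include. The function name and parameters are made up for the example.

```python
def cost_per_port(switch_price, host_price, nos_license_per_switch=0,
                  switch_count=2, host_count=2, ports_per_switch=32):
    """Hardware plus NOS license cost divided by total 100G switch ports."""
    switches = switch_count * (switch_price + nos_license_per_switch)
    hosts = host_count * host_price
    return (switches + hosts) / (switch_count * ports_per_switch)


# Low and high ends of the ranges quoted in the text (Trident 3, OcNOS).
low = cost_per_port(switch_price=6500, host_price=3500,
                    nos_license_per_switch=1000)
high = cost_per_port(switch_price=8000, host_price=4500,
                     nos_license_per_switch=1500)

print(f"Estimated cost per 100G port: ${low:.2f} to ${high:.2f}")
```

Swapping in the Tomahawk price range, or dropping the license cost for a free NOS, only requires changing the arguments.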

You must also include implementation and labor costs. With Archous’s solution we provide turnkey implementation services, and with that comes our purpose-built SDN software to enable the control plane and data plane disaggregation. This “secret sauce” disaggregation functionality operates at the kernel level and is not available as part of the CLI of any known commercially available network operating system.

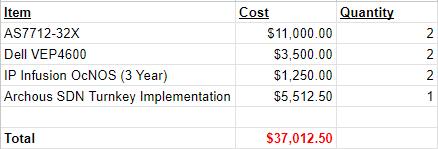

An example summary of initial hardware costs are shown below. Using the example, day 1 costs come out to around $500/per 100G port.

The Archous Recommendation

Our typical hardware recommendation for service providers looking to scale at this level is Edge-Core switches, Dell virtual host platforms, and, if needed, OcNOS as a network operating system. Keep in mind this solution isn’t a fit for all operators, as the intended use case is building a highly customizable Internet Edge within large-scale ISP or datacenter networks.

If you are interested in this solution or open networking in general, feel free to leave a comment here or reach out to us using our various contact methods:

1-866-535-0358

support@archous.tech

support.archous.tech

…or maybe you’re looking for Internet Edge and other ISP core as a service? We can definitely help!